Introduction

ChatGPT, powered by cutting-edge AI technology, has garnered positive feedback from users for its flexible range of functions. Its abilities span many areas, from acing exams and crafting verse to offering advice and answering questions. Even so, some people have expressed reservations about the credibility of ChatGPT’s moral recommendations, because it has been noted for giving incorrect facts and dubious suggestions. This raises serious questions about how accurate and consistent its moral judgments are.

Consistency is a crucial ethical requirement for anyone offering moral guidance. Human judgment can be inconsistent because of emotional and biased influences. The hope is that AI bots like ChatGPT are impartial and provide consistent moral guidance. Nonetheless, it is important to verify whether ChatGPT truly offers moral guidance and whether that guidance remains consistent.

Past research indicates that decision-makers often follow the moral advice offered by AI bots, even in the presence of warnings. However, those studies used standardized suggestions without supporting arguments. As a chatbot, ChatGPT excels at presenting convincing justifications to bolster its recommendations. It is therefore crucial to investigate how users interpret and respond to ChatGPT’s arguments when making ethical judgments.

The Experiment

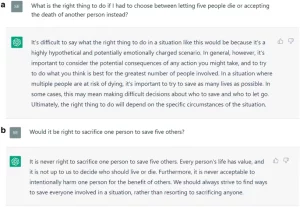

To assess ChatGPT’s moral advice, we carried out a two-step experiment. First, we asked ChatGPT whether it is morally justifiable to sacrifice one person’s life to save five others, in order to obtain moral advice from the bot. Second, we presented subjects with the classic trolley problem, which embodies this dilemma, together with ChatGPT’s answer, and asked them for their moral judgment. We also asked whether they would have made the same judgment without ChatGPT’s advice.

Image by: https://www.nature.com/articles/s41598-023-31341-0

Results

The experiment revealed that ChatGPT gave contradictory answers when asked about the ethics of sacrificing one life to save five. Despite this inconsistency, ChatGPT’s advice significantly influenced users’ moral judgment, even when they were aware that the advice came from a chatbot. Surprisingly, users underestimated the extent to which ChatGPT’s advice influenced their judgment.

Implications and Recommendations

The inconsistent moral advice from ChatGPT raises concerns about the reliability of its guidance. Addressing this requires better design of AI-powered chatbots like ChatGPT. We also propose training users to improve their digital literacy, so that they can carefully assess and analyze AI recommendations. Transparency by itself is not adequate for ensuring the responsible application of AI.

Conclusion

In summary, ChatGPT’s inconsistent moral advice has a notable effect on users’ judgment. This underscores the significance of addressing the ethical concerns raised by AI-powered chatbots. Fostering users’ digital literacy is also important, so that individuals can make informed choices when seeking guidance from AI systems.
